Introduction

I learned to code in Minecraft. Not with mods or plugins, but with mcfunction—the game's built-in command language. It's a bare-bones language with basic arithmetic (+, -, *, /, %), no variables (just "scoreboard" registers), and simple functions for interacting with the game world.

Nevertheless, these limitations are a great foundation for building more complex things, as long as you get creative (get it?). For example, there are no trigonometric functions. To get the sine and cosine of the angle from one entity to another, you summon a marker at the first entity, turn it to face the second, and move it one block forward. Its x/z offsets from the starting point are the sine and cosine of that angle.
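A rough Python analogue of the trick follows. It assumes positions in the horizontal x/z plane and an angle θ measured from the +z axis toward +x, and `unit_offset` is a hypothetical helper. Stepping one block toward the target produces the unit vector, and its components are sin θ and cos θ. With Minecraft's yaw convention the x offset comes out as -sin(yaw), so expect a sign flip in-game.

```python
import math


def unit_offset(src, dst):
    """Mimic the in-game trick: stand at src, face dst, step one block forward.

    src and dst are (x, z) pairs. Returns the (x, z) offset of the new point
    from src, which is the unit vector pointing from src toward dst.
    """
    dx = dst[0] - src[0]
    dz = dst[1] - src[1]
    dist = math.hypot(dx, dz)  # assumes src != dst
    return dx / dist, dz / dist


# With theta measured from +z toward +x, the offset is (sin(theta), cos(theta)).
sin_t, cos_t = unit_offset((0.0, 0.0), (3.0, 4.0))
theta = math.atan2(3.0, 4.0)
print(round(sin_t, 3), round(cos_t, 3))  # 0.6 0.8
```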

Yeggs Tower Defense

To this day, this is still one of my all-time favorite projects. At over 11,000 lines of code, this fully featured tower defense game has players defend against waves of hostile minecarts using strategically placed towers. I drew inspiration from the two tower defense games I loved most growing up: Kingdom Rush and Bloons Tower Defense. It features six base tower types, each with three upgrade levels leading to two specialization branches with three unique abilities, for 18 distinct tower variants. Players earn currency by eliminating minecarts and spend it to build or upgrade towers across multiple custom maps.

Newtonian Physics Simulation

I wondered if Minecraft could simulate real physics. Of course, mcfunction has no calculus, no floating-point math, and no exponentials. But even with calculus, many problems, such as the gravitational three-body problem, have no general closed-form solution. The answer is numerical methods. By discretizing time and replacing derivatives with finite differences, we can simulate differential equations step by step.

The same principle of iteratively refining an approximation drives methods like Newton-Raphson root finding. For physics, though, we don't iterate toward a single answer. We step through time: Δx ≈ v · Δt.
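Written out, the derivation replaces each time derivative with a forward difference over one timestep. That gives the explicit Euler update rules, where F is the net force on a body of mass m:

```latex
\frac{dx}{dt} = v \approx \frac{x(t+\Delta t) - x(t)}{\Delta t}
\quad\Longrightarrow\quad
x(t+\Delta t) \approx x(t) + v(t)\,\Delta t

\frac{dv}{dt} = a = \frac{F}{m} \approx \frac{v(t+\Delta t) - v(t)}{\Delta t}
\quad\Longrightarrow\quad
v(t+\Delta t) \approx v(t) + \frac{F(t)}{m}\,\Delta t
```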

Physics Implementation

The simulations implement gravitational attraction and aerodynamic drag using Euler integration. Each tick advances velocity by a · Δt and position by v · Δt.

For visualization, I use armor stands as particles. They store position data and automatically render in-game, providing built-in position tracking and smooth animation. Each tick, the simulation calculates forces, updates velocity and position, then applies the new position to the armor stand entity.
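The sketch below shows one integer-only tick for the projectile case. It assumes the ×1000 fixed-point scale described under Challenges, a timestep of one tick, and a linear drag model (a = -k·v). `GRAVITY` and `DRAG` are hypothetical constants, not the project's values. Python stands in for mcfunction here, with each arithmetic line roughly corresponding to a scoreboard operation.

```python
SCALE = 1000  # fixed-point scale from the text: 5.234 -> 5234

# Hypothetical constants for illustration (not the project's values), scaled by SCALE.
# Time is measured in ticks, so dt = 1 and a * dt is just a.
GRAVITY = -80  # -0.080 blocks/tick^2
DRAG = 20      # linear drag coefficient 0.020 per tick (assumed form: a = -k * v)


def tick(pos, vel):
    """Advance one tick of 2D (x, y) projectile motion using integer math only.

    pos and vel are [x, y] lists of scaled integers. Returns (new_pos, new_vel).
    """
    # DRAG * vel carries SCALE twice, so divide once to return to SCALE.
    # Python's // floors, so negative results round toward -infinity.
    ax = -(DRAG * vel[0]) // SCALE
    ay = GRAVITY - (DRAG * vel[1]) // SCALE

    # Explicit Euler: position advances with the old velocity, velocity with acceleration.
    new_pos = [pos[0] + vel[0], pos[1] + vel[1]]
    new_vel = [vel[0] + ax, vel[1] + ay]
    return new_pos, new_vel


# Launch from the origin at (0.5, 0.5) blocks/tick and step until the projectile lands.
pos, vel = [0, 0], [500, 500]
ticks = 0
while True:
    pos, vel = tick(pos, vel)
    ticks += 1
    if pos[1] < 0:
        break
print(ticks, pos[0] / SCALE)  # landing tick and x position in blocks
```

Explicit Euler uses the old velocity for the position update. Updating velocity first and then using the new velocity for position (semi-implicit Euler) costs nothing extra and tends to behave better for orbits.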

Challenges

Numerical precision: Minecraft scoreboards store only 32-bit integers. I scaled all values by 1000 (position 5.234 → 5234), giving three decimal places. Rounding errors accumulate, but careful timestep tuning helped with stability.
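Scaling by 1000 has two consequences for multiplication, shown in the sketch below. A product of two scaled values carries the scale twice and must be divided back down. The intermediate product must also fit in 32 bits, so squaring anything above about 46.34 already overflows. The helper names are hypothetical. Python integers never overflow, so the 32-bit check is made explicit.

```python
SCALE = 1000
INT32_MIN, INT32_MAX = -2**31, 2**31 - 1


def fits_int32(n):
    """True if n is representable as a 32-bit scoreboard value."""
    return INT32_MIN <= n <= INT32_MAX


def fixed_mul(a, b):
    """Multiply two SCALE-scaled integers and rescale the result.

    Raises OverflowError if the intermediate product would not fit in 32 bits,
    where a real scoreboard value would wrap around instead.
    """
    product = a * b  # carries SCALE twice: (a/SCALE) * (b/SCALE) * SCALE**2
    if not fits_int32(product):
        raise OverflowError(f"{a} * {b} overflows a 32-bit score")
    return product // SCALE


print(fixed_mul(5234, 2000))  # 5.234 * 2.0 -> 10468, i.e. 10.468
print(fixed_mul(1, 1))        # 0.001 * 0.001 -> 0: the result underflows to zero
```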

Collision singularities: When gravitating objects collide, r → 0 and the force, which scales as 1/r², blows up. To avoid this, I dampen gravity by disabling gravitational interactions at very small separations. A proper collision system would be a fun future addition.
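The following floating-point sketch computes pairwise gravity with a hard distance cutoff, which is one reading of "disabling interactions at small scales". `G` and `R_MIN` are hypothetical values. A common alternative, Plummer softening, replaces r² with r² + ε² so the force stays finite instead of switching off.

```python
import math

G = 1.0      # hypothetical gravitational constant in simulation units
R_MIN = 0.5  # hypothetical cutoff: pairs closer than this exert no force


def accelerations(positions, masses):
    """Pairwise Newtonian gravity in 2D, skipping pairs closer than R_MIN.

    positions is a list of (x, z) tuples; returns a list of (ax, az) tuples.
    """
    acc = [[0.0, 0.0] for _ in positions]
    n = len(positions)
    for i in range(n):
        for j in range(i + 1, n):
            dx = positions[j][0] - positions[i][0]
            dz = positions[j][1] - positions[i][1]
            r = math.hypot(dx, dz)
            if r < R_MIN:
                continue  # disable the interaction instead of letting 1/r^2 blow up
            # |a_i| = G * m_j / r^2, directed along the unit vector (dx, dz) / r
            inv_r3 = 1.0 / (r * r * r)
            acc[i][0] += G * masses[j] * dx * inv_r3
            acc[i][1] += G * masses[j] * dz * inv_r3
            # Equal and opposite pull on body j, scaled by body i's mass
            acc[j][0] -= G * masses[i] * dx * inv_r3
            acc[j][1] -= G * masses[i] * dz * inv_r3
    return [tuple(a) for a in acc]


print(accelerations([(0.0, 0.0), (2.0, 0.0)], [1.0, 1.0]))
```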

The system simulates both projectile motion with air resistance and n-body gravitational dynamics, where multiple objects create emergent orbital phenomena!

DeepMine - Neural Networks in Minecraft

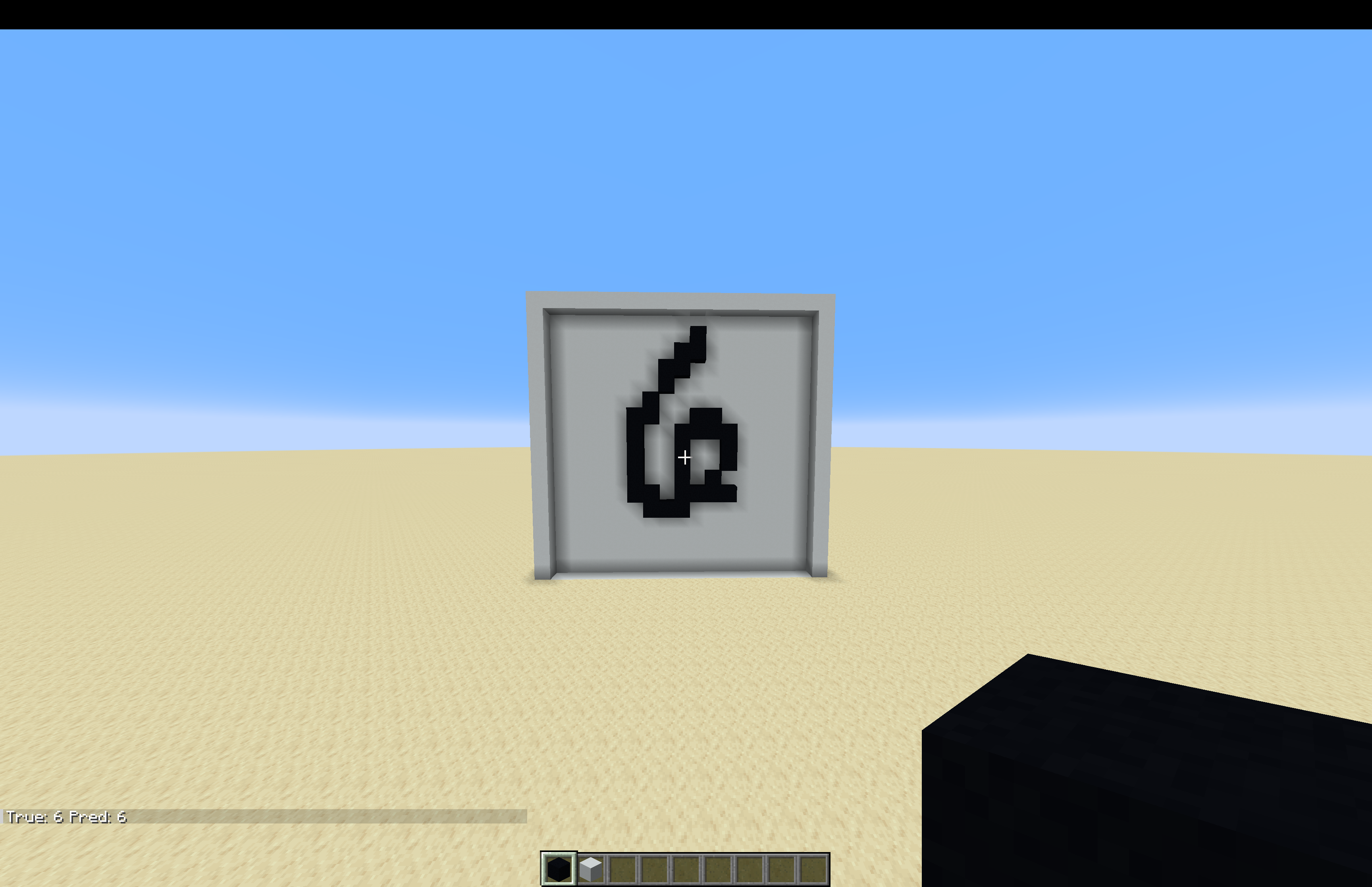

After taking a deep learning class, I wanted to implement a neural network in Minecraft. While the network is trained in Python, it is exported to mcfunction and runs inference entirely in vanilla Minecraft!

Pipeline

Training (Python): A simple MLP (784→32→16→10) trained on MNIST handwritten digits using TensorFlow. Preprocessing to binary values (fully white or black) ensures users can recreate digits with blocks.
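Here is a minimal pure-Python sketch of the binarization step. It assumes a threshold of 128 on MNIST's 0 to 255 grayscale pixels, since the original threshold isn't stated. `binarize` and `flatten` are hypothetical helper names. In a TensorFlow pipeline, the same step would be a single vectorized comparison over the whole dataset.

```python
THRESHOLD = 128  # hypothetical cutoff on MNIST's 0-255 grayscale intensities


def binarize(image):
    """Map a grayscale image (nested lists of 0-255 ints) to 0/1 pixels."""
    return [[1 if px >= THRESHOLD else 0 for px in row] for row in image]


def flatten(image):
    """Row-major flatten, giving the 784-long input vector for a 28x28 image."""
    return [px for row in image for px in row]


# A fake 28x28 "digit": one bright vertical stroke down column 14.
img = [[200 if c == 14 else 30 for c in range(28)] for r in range(28)]
vec = flatten(binarize(img))
print(len(vec), sum(vec))
```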

Weight Extraction: Since mcfunction only has integers, I scale weights by 10,000 (0.7234 → 7234), preserving four decimal places. A Python script exports these scaled weights as scoreboard initialization commands.
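An export script along these lines might look like the sketch below. It assumes a hypothetical fake-player naming scheme (`l1_w_3_7` for layer 1, neuron 3, input 7) and a hypothetical objective called `weights`, which would need to exist first (`scoreboard objectives add weights dummy`). Biases are scaled by the same factor here. Whether that is right depends on how inference scales the inputs.

```python
SCALE = 10_000  # weight scale from the text: 0.7234 -> 7234


def export_layer(weights, biases, layer, objective="weights"):
    """Turn one dense layer into `scoreboard players set` commands.

    weights[i][j] connects input j to output neuron i; biases[i] belongs to
    neuron i. The fake-player names and objective are hypothetical.
    """
    lines = []
    for i, row in enumerate(weights):
        for j, w in enumerate(row):
            lines.append(
                f"scoreboard players set l{layer}_w_{i}_{j} {objective} {round(w * SCALE)}"
            )
        lines.append(
            f"scoreboard players set l{layer}_b_{i} {objective} {round(biases[i] * SCALE)}"
        )
    return lines


print("\n".join(export_layer([[0.7234, -0.1]], [0.05], layer=1)))
```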

Inference (Minecraft): Three operations implemented in mcfunction:

- Matrix multiplication: Nested loops compute output[i] = Σ(input[j] × weight[i][j]) + bias[i] using multiply-accumulate operations. Fixed-point multiplication requires rescaling after each layer, since the product of two values scaled by 10,000 is scaled by 10,000².
- ReLU activation: A simple conditional: if a neuron's value is below 0, set it to 0.
- Softmax (approximated): We only need the argmax, and softmax preserves the ordering of its inputs because the exponential is monotonic and every output shares the same denominator. So we skip it entirely and just find the maximum output value.
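The three operations can be sketched in integer-only Python under several assumptions. Weights and biases are exported as round(w × 10,000). Raw 0/1 pixels are used directly as integers, so the first layer's sums are already at the 10,000 scale and need no division. ReLU applies to hidden layers only, and rescaling uses floor division. The function names are hypothetical. Python integers never overflow, so this sketch does not model the 32-bit scoreboard limit.

```python
SCALE = 10_000  # weights and biases stored as round(w * SCALE)


def dense(inputs, weights, biases, inputs_scaled=True):
    """Integer dense layer: out[i] = sum_j inputs[j] * weights[i][j] + biases[i].

    If the inputs are SCALE-scaled, each product carries SCALE twice, so the
    accumulated sum is divided by SCALE once to return to the working scale.
    """
    out = []
    for row, b in zip(weights, biases):
        acc = 0
        for x, w in zip(inputs, row):
            acc += x * w  # multiply-accumulate
        if inputs_scaled:
            acc //= SCALE
        out.append(acc + b)
    return out


def relu(values):
    """Clamp negatives to zero, like the 'if neuron < 0, set to 0' check."""
    return [v if v > 0 else 0 for v in values]


def argmax(values):
    """Index of the largest output (first one on ties); softmax wouldn't change it."""
    best = 0
    for i, v in enumerate(values):
        if v > values[best]:
            best = i
    return best


def predict(pixels, layers):
    """Forward pass: pixels are 0/1 ints, layers is a list of (weights, biases)."""
    acts = list(pixels)
    for k, (weights, biases) in enumerate(layers):
        acts = dense(acts, weights, biases, inputs_scaled=(k > 0))
        if k < len(layers) - 1:  # ReLU on hidden layers only
            acts = relu(acts)
    return argmax(acts)


# Toy 2 -> 2 -> 2 network that echoes which input pixel is lit.
layers = [
    ([[10000, -10000], [-10000, 10000]], [0, 0]),
    ([[10000, 0], [0, 10000]], [0, 0]),
]
print(predict([1, 0], layers), predict([0, 1], layers))
```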

Users draw digits on a 28×28 block grid, then run the predictor. The network reads the blocks, performs forward propagation through all layers, and displays the predicted digit. Despite the crude integer arithmetic and the missing softmax, the system classifies drawn digits accurately.
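The block-reading step could be generated from Python as one command per grid cell. This sketch assumes a vertical 28×28 wall whose bottom-left corner is at (x0, y0, z), digits drawn in black concrete, and hypothetical score holders `p_<row>_<col>` on an objective named `pixels`. It relies on `execute store result score ... if block ...` storing 1 when the block matches and 0 otherwise.

```python
def pixel_read_commands(x0, y0, z, block="minecraft:black_concrete", objective="pixels"):
    """Emit one command per cell of a 28x28 vertical grid.

    Cell (row, col) sits at (x0 + col, y0 + 27 - row, z), so row 0 is the top row.
    Each command stores 1 in p_<row>_<col> if the cell holds `block`, else 0.
    """
    cmds = []
    for row in range(28):
        for col in range(28):
            x, y = x0 + col, y0 + 27 - row
            cmds.append(
                f"execute store result score p_{row}_{col} {objective} "
                f"if block {x} {y} {z} {block}"
            )
    return cmds


cmds = pixel_read_commands(0, 64, 0)
print(cmds[0])
```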